PySpark Filter Functions of Filter in PySpark with Examples

Method 2: Using filter() and col(). Here we use the col function from pyspark.sql.functions, which refers to a DataFrame column by name. Syntax: col(column_name), where column_name is the name of a column in the DataFrame. Example 1: Filter a column with a single condition.

PySpark Machine Learning An Introduction TechQlik

You could create a regex pattern that fits all your desired patterns: list_desired_patterns = ["ABC", "JFK"], then regex_pattern = "|".join(list_desired_patterns). Then apply the rlike Column method: filtered_sdf = sdf.filter(spark_fns.col("String").rlike(regex_pattern))

How to use filter condition in pyspark BeginnersBug

🔶 PySpark Solution - https://lnkd.in/dgg7HUSN Follow Ankur DHAKA for more Data Engineering posts. #sql #dataengineering #pyspark #sparksql #interviewquestions #postgresql #spark #dataengineer

zipfian/buildingsparkapplicationslivelessons Gitter

Filtering a pyspark dataframe using isin by exclusion [duplicate]. Asked 6 years, 11 months ago; modified 5 years, 5 months ago; viewed 195k times. This question already has answers here: Pyspark dataframe operator "IS NOT IN" (8 answers). Closed 4 years ago.

Pyspark filter operation Pyspark tutorial for beginners Tutorial 4 YouTube

What is PySpark Filter OverView of PySpark Filter

Method 1: Using filter(). filter() checks a condition and returns the rows that satisfy it; where() is an alias and behaves the same way. Syntax: dataframe.filter(condition). Example 1: Get particular IDs with filter(): dataframe.filter((dataframe.ID).isin([1, 2, 3])).show(). Example 2: Get names from dataframe columns.

SPARK (PYSPARK) 2 (FILTERS 2) ISIN YouTube

In Spark/PySpark, filtering a DataFrame using values from a list is a transformation that selects the subset of rows whose column value appears in that list. It returns a new DataFrame containing only the rows that satisfy the condition.

Using filter: how to use the PySpark filter in Python (西门吹雪's tech blog, 51CTO Blog)

Solution: Using isin() & NOT isin() Operator. In Spark, use the isin() function of the Column class to check whether a DataFrame column value exists in a list of string values. Let's see an example. The example below keeps the rows whose language column value is 'Java' or 'Scala'.

Tutorial 4 Pyspark With PythonPyspark DataFrames Filter Operations YouTube

December 8, 2022. PySpark isin(), or the IN operator, is used to check or filter whether DataFrame column values exist in a list of values. isin() is a function of the Column class that returns a boolean Column, which evaluates to true when the value of the expression is contained in the evaluated values of the arguments.

pyspark filter corrupted records Interview tips YouTube

If both dataframes are big, you should consider using an inner join, which will work as a filter. First, let's create a dataframe containing the order IDs we want to keep: orderid_df = orddata.select(orddata.ORDER_ID.alias("ORDValue")).distinct(). Now let's join it with our actdataall dataframe:

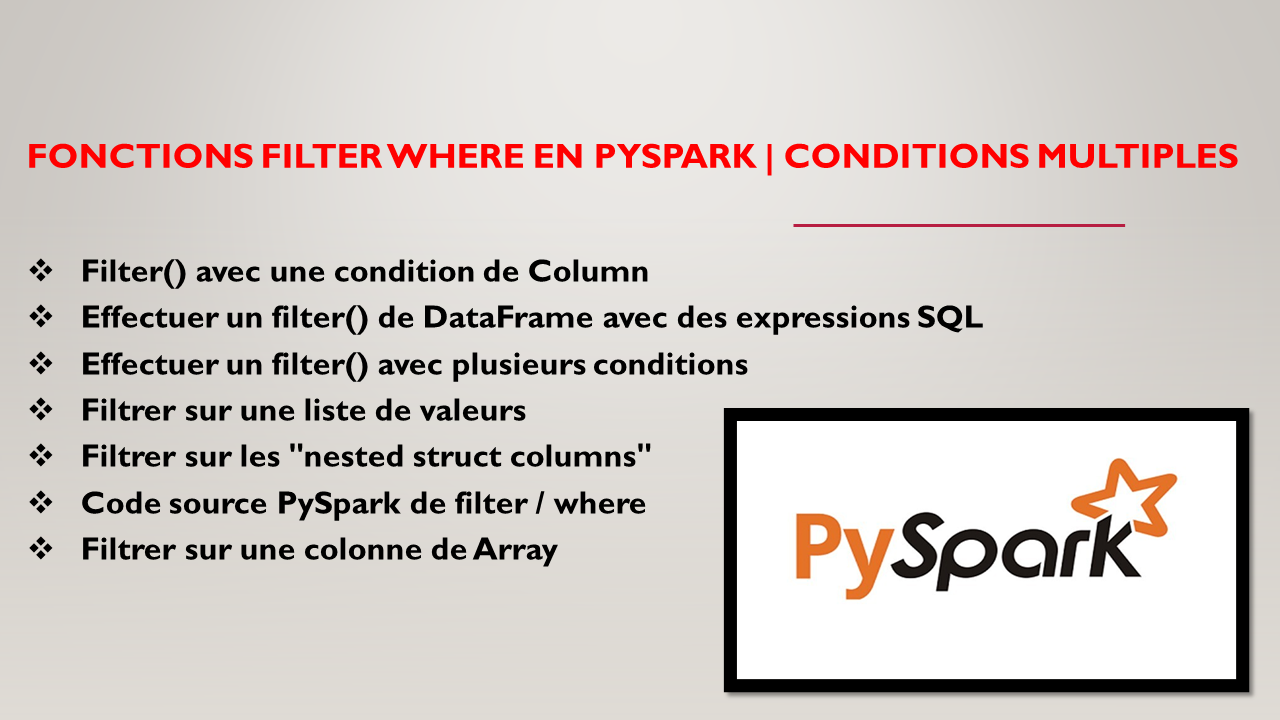

PySpark filter and where Functions with Multiple Conditions: Spark By {Examples}

PySpark Filter on Dataframe Example 1: Filtering with Multiple Conditions Example 2: Filtering with LIKE Example 3: Filtering with IN Example 4: Filtering with NOT Example 5: Filtering with Regular Expressions Example 6: Filtering with a Custom Function Conclusion References 1. Official Apache Spark Documentation - DataFrame: 2.

PySpark Unit Test Best Practices Le blog de Cellenza

1. Filter a DataFrame column with contains(). The PySpark contains() method checks whether a DataFrame string column contains the string given as an argument (it matches on part of the string). It returns true if the string is found and false if not. The example below returns all rows whose full_name contains the string Smith.

PySpark NOT isin() or IS NOT IN Operator Spark By {Examples}

The isin method belongs to the Column class of the DataFrame API and lets us filter rows based on whether a column's value is in a specified list. It's akin to the SQL IN operator, which checks whether a value exists within a list of values.

pyspark select/filter statement both not working Stack Overflow

Using IN Operator or isin Function. Let us understand how to use the IN operator while filtering data by comparing a column against multiple values. It is an alternative to Boolean OR, where a single column is compared with multiple values using an equality condition. Let us start the Spark context for this notebook so that we can execute the code provided.

PySpark Transformations and Actions show, count, collect, distinct, withColumn, filter

How to filter using isin from another pyspark dataframe. Viewed 672 times. df1 has a lot of data, and I want to keep the rows whose id is available in df2. Here's what I did: df1.filter(col('id').isin(df2.select('id'))). Here's the error message,

Transforming Big Data The Power of PySpark Filter for Efficient Processing

The NOT IN condition (sometimes called the NOT operator) negates the result of isin(). 1. Quick Examples of Using NOT IN. The following are quick examples of how to use NOT IN to filter rows from a DataFrame.